Problem

Appaca needed a reliable agent backend that could:

- Execute tools in deterministic order

- Delegate to sub-agents without infinite loops

- Provide audit trails for every decision

- Handle failures gracefully without losing context

Solution

Built a Python agent engine with three core components:

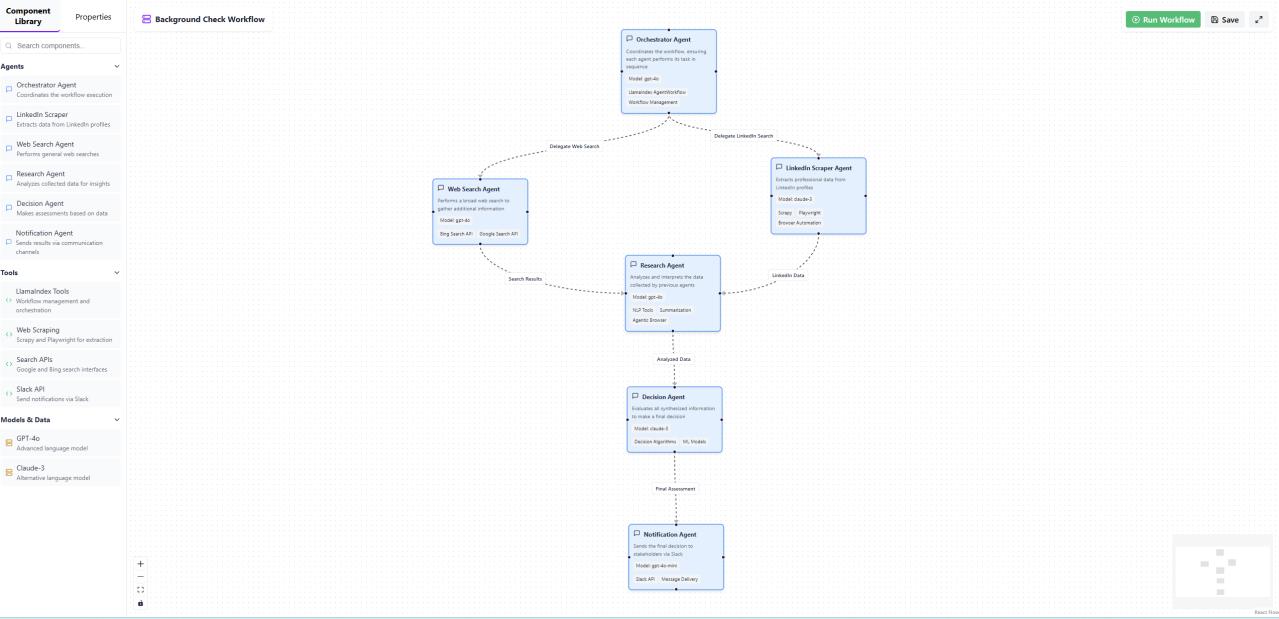

The visual workflow builder showing agent orchestration: Orchestrator → Web Search → Research → Decision → Notification agents with tools like LlamaIndex, Web Scraping, Search APIs, and Slack

StepRunner Orchestrator

Sequential execution engine that processes tool calls in order, maintaining state between steps and handling retries for transient failures.
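A minimal sketch of what such a sequential runner could look like. The names `Step`, `StepRunner`, and `TransientError`, and the retry and backoff parameters, are illustrative assumptions rather than the project's actual API.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


class TransientError(Exception):
    """Hypothetical marker for failures that are worth retrying."""


@dataclass
class Step:
    name: str
    fn: Callable[[dict[str, Any]], Any]  # receives shared state, returns a result


@dataclass
class StepRunner:
    max_retries: int = 2
    backoff_seconds: float = 0.0
    state: dict[str, Any] = field(default_factory=dict)
    trace: list[tuple[str, int, str]] = field(default_factory=list)

    def run(self, steps: list[Step]) -> dict[str, Any]:
        for step in steps:  # strictly in list order, one step at a time
            for attempt in range(1, self.max_retries + 2):
                try:
                    self.state[step.name] = step.fn(self.state)
                    self.trace.append((step.name, attempt, "ok"))
                    break
                except TransientError:
                    final = attempt > self.max_retries
                    self.trace.append((step.name, attempt, "failed" if final else "retry"))
                    if final:
                        raise  # retries exhausted; earlier results stay in self.state
                    time.sleep(self.backoff_seconds * attempt)
        return self.state


# Usage: the first call to the search step fails once, then succeeds on retry.
calls = {"n": 0}

def flaky_search(state: dict[str, Any]) -> list[str]:
    calls["n"] += 1
    if calls["n"] == 1:
        raise TransientError("timeout")
    return ["result-a", "result-b"]

def summarize(state: dict[str, Any]) -> str:
    return f"{len(state['search'])} results"

runner = StepRunner()
final_state = runner.run([Step("search", flaky_search), Step("summarize", summarize)])
```

Each step reads the results of earlier steps from the shared state, and `trace` records every attempt, which is one simple way to feed an audit trail.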

Tool Registry & Governance

Centralized registry with:

- JSON schema contracts for every tool

- Capability tags for permission filtering

- Retry policies and circuit breakers

- Allowlist enforcement
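The registry ideas above can be sketched as follows. This is an illustration under stated assumptions: `ToolSpec`, `ToolRegistry`, and `check_args` are hypothetical names, the validator covers only a tiny subset of JSON Schema (`required` and primitive `type`), and retry policies and circuit breakers are left out for brevity.

```python
from dataclasses import dataclass
from typing import Any, Callable

# Maps the JSON Schema primitive type names used below to Python types.
_JSON_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


@dataclass(frozen=True)
class ToolSpec:
    name: str
    fn: Callable[..., Any]
    schema: dict[str, Any]                      # JSON-Schema-style argument contract
    capabilities: frozenset[str] = frozenset()  # tags used for permission filtering


def check_args(schema: dict[str, Any], args: dict[str, Any]) -> None:
    """Check 'required' keys and per-property 'type' only (not full JSON Schema)."""
    for key in schema.get("required", []):
        if key not in args:
            raise ValueError(f"missing required argument: {key}")
    for key, value in args.items():
        prop = schema.get("properties", {}).get(key)
        if prop is None:
            raise ValueError(f"unexpected argument: {key}")
        expected = _JSON_TYPES.get(prop.get("type"))
        if expected is not None and not isinstance(value, expected):
            raise ValueError(f"argument {key!r} must be of type {prop['type']}")


class ToolRegistry:
    def __init__(self) -> None:
        self._tools: dict[str, ToolSpec] = {}

    def register(self, spec: ToolSpec) -> None:
        self._tools[spec.name] = spec

    def visible_to(self, granted: set[str]) -> list[str]:
        """Names of tools whose capability tags are all covered by the grant."""
        return sorted(n for n, s in self._tools.items() if s.capabilities <= granted)

    def call(self, name: str, args: dict[str, Any], allowlist: set[str]) -> Any:
        if name not in allowlist or name not in self._tools:
            raise PermissionError(f"tool not allowed: {name}")
        spec = self._tools[name]
        check_args(spec.schema, args)  # enforce the contract before executing
        return spec.fn(**args)


# Usage with two hypothetical tools.
registry = ToolRegistry()
registry.register(ToolSpec(
    name="web_search",
    fn=lambda query, limit=5: f"searched {query!r} (limit={limit})",
    schema={
        "type": "object",
        "properties": {"query": {"type": "string"}, "limit": {"type": "integer"}},
        "required": ["query"],
    },
    capabilities=frozenset({"network"}),
))
registry.register(ToolSpec(
    name="send_slack",
    fn=lambda text: f"sent {text!r}",
    schema={"type": "object", "properties": {"text": {"type": "string"}}, "required": ["text"]},
    capabilities=frozenset({"network", "notify"}),
))
result = registry.call("web_search", {"query": "agents"}, allowlist={"web_search"})
```

Checking the allowlist before the schema means a caller without permission learns nothing about a tool's argument contract.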

Sub-Agent Delegation

Nested agent support with safeguards:

- Maximum depth limits

- Cycle detection

- Resource budgets

- Supervisor approval gates
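One way the first three safeguards could fit together is sketched below. `DelegationContext`, `Budget`, and `DelegationError` are hypothetical names, the budget is counted in delegations purely for illustration, and supervisor approval gates are omitted.

```python
from dataclasses import dataclass


class DelegationError(Exception):
    """Raised when a delegation would violate one of the safeguards."""


@dataclass
class Budget:
    remaining_calls: int  # shared across the whole delegation tree

    def spend(self, n: int = 1) -> None:
        if self.remaining_calls < n:
            raise DelegationError("resource budget exhausted")
        self.remaining_calls -= n


@dataclass(frozen=True)
class DelegationContext:
    budget: Budget
    max_depth: int = 3
    path: tuple[str, ...] = ()  # agent names from the root to the current agent

    def delegate(self, agent_name: str) -> "DelegationContext":
        # Cycle detection: an agent may not appear twice on the same path.
        if agent_name in self.path:
            cycle = " -> ".join(self.path + (agent_name,))
            raise DelegationError(f"cycle detected: {cycle}")
        # Depth limit: refuse to nest beyond max_depth agents.
        if len(self.path) >= self.max_depth:
            raise DelegationError(f"max depth {self.max_depth} exceeded")
        # Resource budget: every delegation draws from the shared pool.
        self.budget.spend()
        return DelegationContext(self.budget, self.max_depth, self.path + (agent_name,))


# Usage: the orchestrator hands off to a research agent.
root = DelegationContext(Budget(remaining_calls=10), max_depth=3)
research = root.delegate("orchestrator").delegate("research")
```

Because the context is immutable and each child gets its own `path`, sibling sub-agents cannot interfere with each other's cycle checks, while the single `Budget` object still caps total work.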

Architecture

    ┌─────────────────┐
    │   Nuxt.js UI    │  (Appaca proprietary)
    └────────┬────────┘
             │
    ┌────────▼────────┐
    │   Node.js API   │  (Appaca proprietary)
    └────────┬────────┘
             │
    ┌────────▼────────┐
    │  Python Agent   │  ← This project
    │     Engine      │
    ├─────────────────┤
    │ - StepRunner    │
    │ - Tool Registry │
    │ - Sub-agents    │
    │ - Telemetry     │
    └─────────────────┘

Key Decisions

Why Python over TypeScript?

The AI/ML ecosystem is Python-first, with better library support for embeddings, vector operations, and model integrations. FastAPI also provides excellent async performance.

Why Sequential over Parallel?

Deterministic ordering simplifies debugging and audit trails. When tool B depends on tool A’s output, running the two in parallel can create race conditions, so sequential execution with explicit dependencies is more predictable.
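To illustrate sequential execution with explicit dependencies, the sketch below derives one fixed order from a dependency map using the standard library's `graphlib`, then runs the tools one at a time. The plan, the tool names, and the lambda bodies are hypothetical, loosely modeled on the agents in the workflow figure.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable

# Hypothetical plan: each tool maps to the tools whose output it needs.
dependencies: dict[str, list[str]] = {
    "web_search": [],
    "research": ["web_search"],
    "decision": ["research"],
    "notification": ["decision"],
}


def execution_order(deps: dict[str, list[str]]) -> list[str]:
    """Return a dependency-respecting order; raises graphlib.CycleError on cycles."""
    return list(TopologicalSorter(deps).static_order())


def run_plan(
    deps: dict[str, list[str]],
    tools: dict[str, Callable[[dict[str, Any]], Any]],
) -> dict[str, Any]:
    outputs: dict[str, Any] = {}
    for name in execution_order(deps):
        # A tool only ever sees outputs that are already complete.
        inputs = {dep: outputs[dep] for dep in deps.get(name, [])}
        outputs[name] = tools[name](inputs)
    return outputs


tools = {
    "web_search": lambda inputs: ["doc-1", "doc-2"],
    "research": lambda inputs: f"summary of {len(inputs['web_search'])} docs",
    "decision": lambda inputs: "notify" if inputs["research"] else "skip",
    "notification": lambda inputs: f"slack: {inputs['decision']}",
}

order = execution_order(dependencies)
outputs = run_plan(dependencies, tools)
```

Since every tool runs only after its declared inputs exist, there is no window in which tool B can observe a half-finished result from tool A.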

Results

- Reduced debugging time through structured telemetry

- Zero orphaned executions via lifecycle state machine

- Audit-ready logs for compliance requirements
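A lifecycle state machine of the kind mentioned above might look like the following sketch. The state names, the `Execution` class, and the audit record fields are assumptions made for illustration; the source does not specify them.

```python
from datetime import datetime, timezone
from enum import Enum
from typing import Any, Callable


class State(Enum):
    PENDING = "pending"
    RUNNING = "running"
    SUCCEEDED = "succeeded"
    FAILED = "failed"
    CANCELLED = "cancelled"


# Terminal states have no outgoing transitions, so they are absent from ALLOWED.
ALLOWED = {
    State.PENDING: {State.RUNNING, State.CANCELLED},
    State.RUNNING: {State.SUCCEEDED, State.FAILED, State.CANCELLED},
}


class Execution:
    def __init__(self, execution_id: str) -> None:
        self.id = execution_id
        self.state = State.PENDING
        self.audit_log: list[dict[str, str]] = []

    def transition(self, new_state: State, reason: str) -> None:
        if new_state not in ALLOWED.get(self.state, set()):
            raise ValueError(f"illegal transition {self.state.value} -> {new_state.value}")
        # Record every state change so the log can be replayed later.
        self.audit_log.append({
            "execution_id": self.id,
            "from": self.state.value,
            "to": new_state.value,
            "reason": reason,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        self.state = new_state


def run_tracked(execution: Execution, work: Callable[[], Any]) -> Any:
    """Run work() so the execution always ends in a terminal state."""
    execution.transition(State.RUNNING, "started")
    try:
        result = work()
    except Exception as exc:
        execution.transition(State.FAILED, f"error: {exc}")
        raise
    execution.transition(State.SUCCEEDED, "completed")
    return result


execution = Execution("run-1")
value = run_tracked(execution, lambda: 42)
```

Routing every run through a wrapper like `run_tracked` is what keeps executions from being orphaned in a non-terminal state: a failure still produces a `FAILED` transition and a log entry before the exception propagates.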