The problem

Building a custom Discord bot normally takes days of programming, infrastructure setup, and configuration. Most community owners have the idea but not the engineering skills or time. VibeCord closes that gap: users describe what they want in plain English, and the platform generates, tests, packages, and deploys a live bot in under 10 minutes.

The harder problem underneath that: shipping a system like this at all. AI-generated code at runtime, cloud deployment on demand, multi-tenant isolation, and a real-time feedback loop — all of this has to hold up when users start depending on it. The visible product is simple. The infrastructure underneath it isn’t.

The flow: idea to live bot in under 10 minutes

1 — Describe it

Users type what they want: “A welcome bot that greets new members.” No configuration. No code.

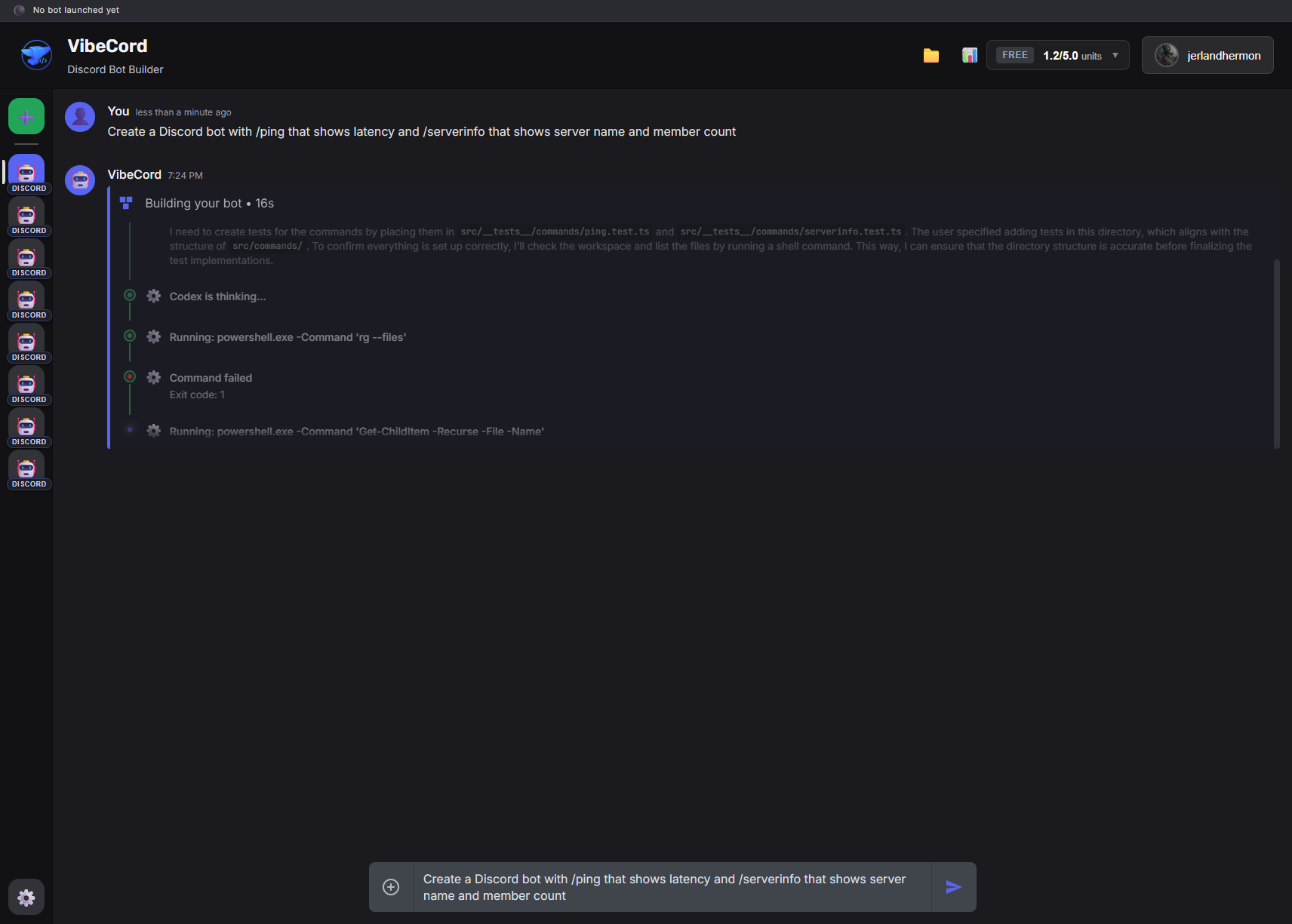

2 — Agent generates it

OpenAI Codex generates the full bot codebase in real time: entry point, event handlers, commands, services, and tests. The user sees it happen.

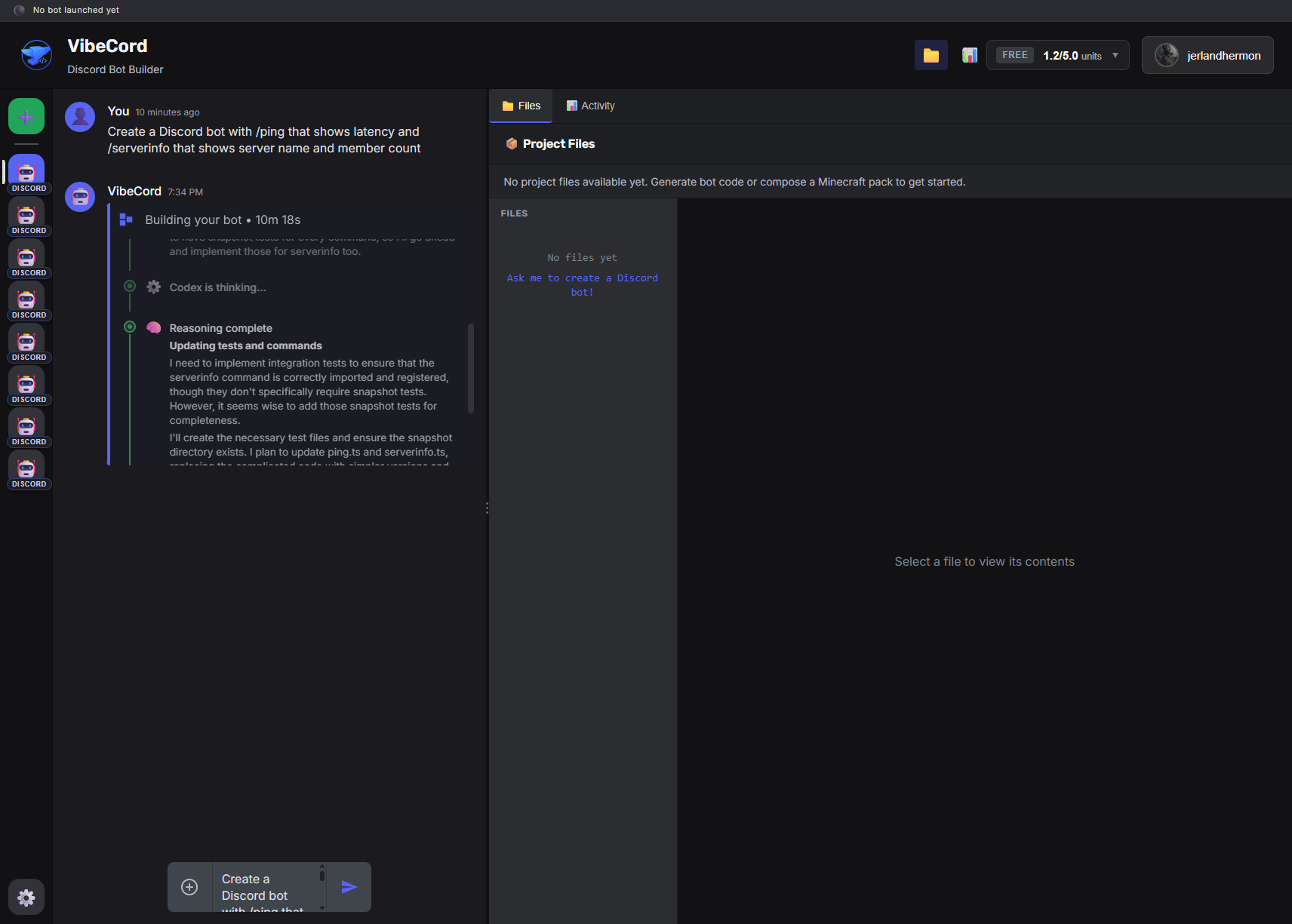

3 — Review and deploy

Full file browser with syntax highlighting. Users can inspect every generated file before deploying.

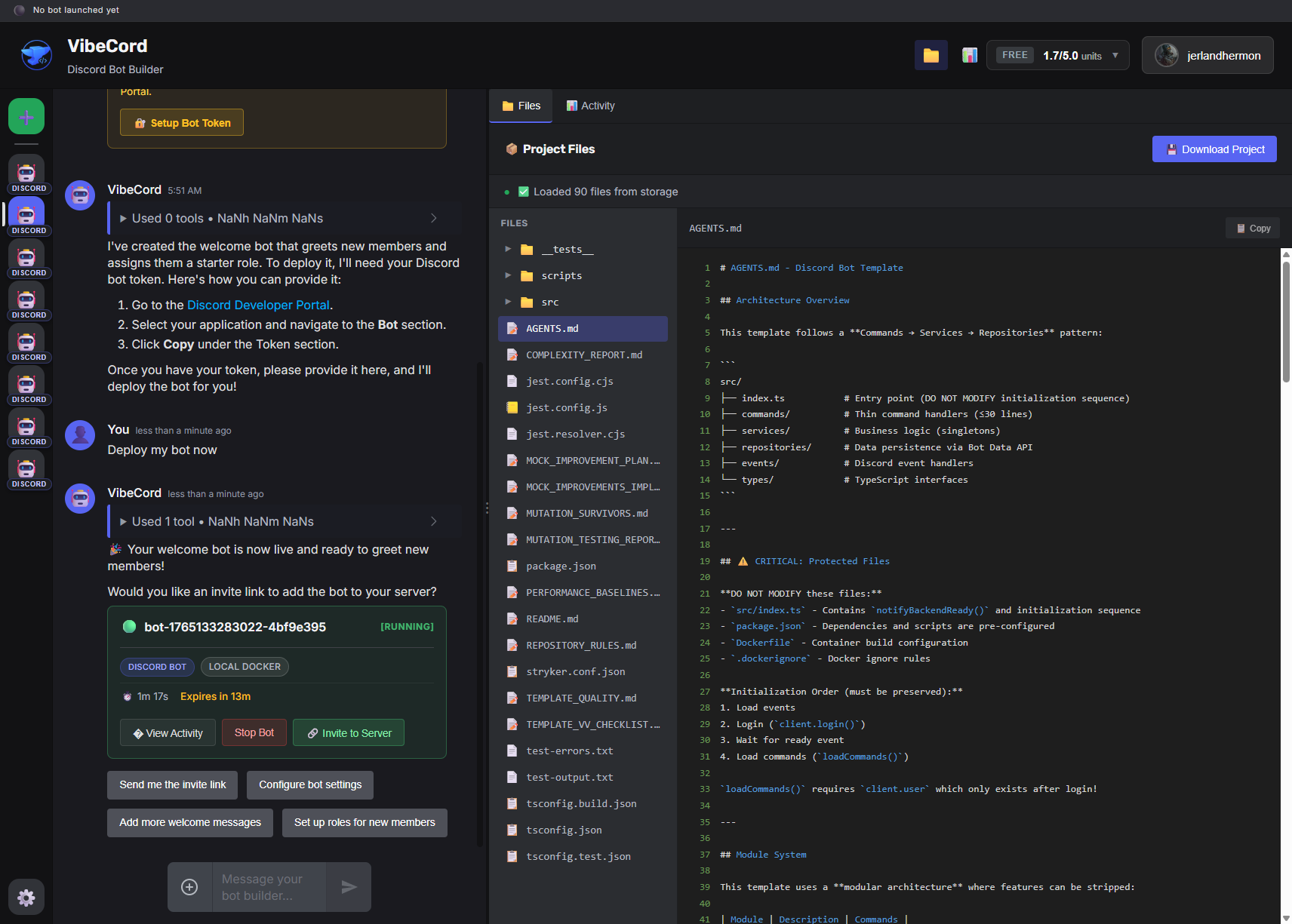

4 — Running

Bot running in an isolated AWS Fargate container. Status: live. Deploy time: 2–4 minutes for most bots.

Results

1,500+ users reached the platform without paid acquisition. 2,550+ unit tests with 85%+ coverage. 62% code reduction (13k → 5k LOC) through an 8-phase deep refactor with zero breaking changes.

A typical bot goes from description to running in under 10 minutes. Initial generation latency was 30+ minutes; optimization work (model selection, prompt trimming, parallelized generation steps) brought it down to 2–4 minutes.

The 9-phase user journey (Prompt → Clarify → Plan → Preview → Confirm → Generate → Token → Deploy → Running) is verified end-to-end by golden-path tests that run on every push to main.
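One way to picture the journey is as an ordered list of phases with a check that a recorded run visited each one in sequence. The sketch below is illustrative only: the phase names come from the text, while `nextPhase` and `isGoldenPath` are hypothetical helpers, not the platform's actual test code.

```typescript
// The nine phases named in the text, in order.
const PHASES = [
  "Prompt", "Clarify", "Plan", "Preview", "Confirm",
  "Generate", "Token", "Deploy", "Running",
] as const;

type Phase = (typeof PHASES)[number];

// Hypothetical helper: the phase that follows `current`,
// or null once the bot has reached Running.
function nextPhase(current: Phase): Phase | null {
  const next = PHASES[PHASES.indexOf(current) + 1];
  return next ?? null;
}

// Hypothetical golden-path check: a recorded run must visit
// every phase exactly once, in the documented order.
function isGoldenPath(visited: readonly Phase[]): boolean {
  return (
    visited.length === PHASES.length &&
    visited.every((phase, i) => phase === PHASES[i])
  );
}
```

A run that skips Clarify or stops at Deploy fails `isGoldenPath`, which is the kind of regression an end-to-end golden-path test is meant to catch.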

Architecture

The system is a TypeScript monorepo with clear domain separation:

Chat UI → Express backend (Agents + Codex) → Code artifact (S3) → AWS Fargate → Live bot

Four domain contexts with anti-corruption layers separating them: Discord Bot Builder, Platform/Infrastructure, Evaluation, and Minecraft/Luanti (feature-flagged). Each context owns its own models and terminology. A monolith backend keeps deployment simple while heavy work (bot execution) runs in isolated per-user containers.
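An anti-corruption layer is, at its smallest, a translation function at the boundary between two contexts. The sketch below assumes a hypothetical `PlatformTaskRecord` on the Platform/Infrastructure side and a hypothetical `BotDeployment` on the Discord Bot Builder side; the field names and the simplified status set are assumptions for illustration, not the project's real models.

```typescript
// Hypothetical record as the Platform/Infrastructure context might store it.
// The status values are a simplified subset chosen for this example.
interface PlatformTaskRecord {
  taskArn: string;
  lastStatus: "PROVISIONING" | "PENDING" | "RUNNING" | "STOPPED";
  ownerId: string;
}

// Hypothetical model used inside the Discord Bot Builder context.
interface BotDeployment {
  userId: string;
  state: "starting" | "live" | "offline";
}

// The anti-corruption layer: the only place where infrastructure
// vocabulary is translated into bot-builder vocabulary.
function toBotDeployment(record: PlatformTaskRecord): BotDeployment {
  const stateByStatus: Record<
    PlatformTaskRecord["lastStatus"],
    BotDeployment["state"]
  > = {
    PROVISIONING: "starting",
    PENDING: "starting",
    RUNNING: "live",
    STOPPED: "offline",
  };
  return { userId: record.ownerId, state: stateByStatus[record.lastStatus] };
}
```

Because the mapping is typed against both models, adding a new infrastructure status fails to compile until the translation is updated, so the change cannot leak silently into the other context.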

Infrastructure is provisioned via Terraform: RDS PostgreSQL, DynamoDB for high-volume ephemeral data, an ECS Fargate cluster, ALB, CloudFront, S3 for code artifacts, and AWS Secrets Manager. CI/CD runs on GitHub Actions with staged gates: unit tests on every push, OpenAI Evals gated to main only (cost control), and a post-deploy smoke test with auto-rollback in under 2 minutes.
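The staged gates can be read as a small decision function over the branch being pushed. This is a sketch under assumptions: the gate names are invented for illustration, and the assumption that deployment (and therefore the smoke test) happens only from main is mine, not stated in the pipeline description.

```typescript
// Hypothetical gate names; the real workflow job names are not given in the text.
type Gate = "unit-tests" | "openai-evals" | "deploy" | "smoke-test";

// Returns the ordered gates for a push to `branch`.
// Assumption: only pushes to main deploy, so evals and the
// post-deploy smoke test run there and nowhere else.
function gatesFor(branch: string): Gate[] {
  const gates: Gate[] = ["unit-tests"]; // runs on every push
  if (branch === "main") {
    gates.push("openai-evals", "deploy", "smoke-test");
  }
  return gates;
}
```

Keeping the paid evaluation step behind the main-branch check is what delivers the cost control: feature branches get fast feedback from unit tests without triggering model calls.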

What broke and what I learned

The system shipped before the governance layer caught up. Three things went wrong:

1. Monolithic architecture under real user load. Domain boundaries existed in code but weren’t enforced. New features leaked across contexts, making the codebase increasingly coupled. Fix: introduced anti-corruption layers and strict domain separation during the Jan 2026 refactor. This is where the 62% code reduction came from: not deletion, but clarity.

2. No audit trail for AI actions. Users couldn’t understand why the bot did what it did. When something went wrong, there was no log of what the agent decided. Fix: added structured logging to every agent step, streamed live via SSE to the UI. The Unified Timeline shows every phase, decision, and error in real time.

3. Deploy complexity outpaced governance. The CI/CD pipeline was fragile when multiple workflows ran in parallel: one failing test could silently skip others. Fix: consolidated to a single ordered pipeline, introduced path filtering to skip irrelevant test suites, and made rollback automatic.
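The audit trail fix in item 2 comes down to turning each agent step into a structured record and writing it to the client in the Server-Sent Events wire format. The sketch below shows only that serialization step. `AgentStepEvent` and `toSseFrame` are hypothetical names and the field set is an assumption; the `id:`, `event:`, and `data:` fields and the blank-line terminator follow the standard SSE format.

```typescript
// Hypothetical structured log record for one agent step.
interface AgentStepEvent {
  phase: string; // e.g. "Generate"
  kind: "progress" | "decision" | "error";
  message: string;
  timestamp: string; // ISO 8601
}

// Serializes one event as a Server-Sent Events frame.
// JSON.stringify escapes newlines, so the payload always fits on a
// single `data:` line; the trailing blank line terminates the frame.
function toSseFrame(id: number, event: AgentStepEvent): string {
  return (
    `id: ${id}\n` +
    `event: ${event.kind}\n` +
    `data: ${JSON.stringify(event)}\n\n`
  );
}
```

On the server, each frame would be written to a response whose `Content-Type` is `text/event-stream`. The `id` field lets a reconnecting browser report the last event it saw, which is useful for a timeline that must not lose steps.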

The underlying lesson: The limiting factor is never the AI. It is the surrounding system’s ability to make AI actions explainable, recoverable, and operationally sane.

This is why Portarium exists. The patterns I learned fixing VibeCord — validation at every boundary, approvals before irreversible actions, full audit trails — became the architecture behind the consulting work.